Final Year Project & Dissertation

Cambridge Part II (2016-17)This large final-year individual project required me to write a large amount of C#.NET and WPF code, before writing up my findings as a dissertation. I implemented a multi-threaded feature computer which extracted both high-level features (using a graph-based image segmentation algorithm) and low-level features from a folder of images, as well as two different user interfaces for sorting them.

Below is an extract from my dissertation that introduces the problem addressed in the project. To see the full 12,000 word dissertation, click here [PDF, 10MB].

This project has roots in the field of aesthetic image quality analysis, a subtopic of Computer Vision concerned with evaluating how beautiful images are to humans. The task addressed by the project is a specific one within this underlying field, with emphasis on leaving the subjectivity with the humans that create it.

Arguably the “holy grail” problem of Computer Vision is that of general object recognition. It is something that humans do with minimal effort and yet we have little cognitive penetrance of the task. We use past experiences to recognise or try to understand the salient objects and scenes, and use multiple contexts to form an aesthetic opinion. Therefore it makes sense to mimic this object recognition process when trying to automate the analysis of “image quality”. However, since general object recognition is hypothesised to be AI-complete, it must be approximated. This project performs such an approximation by segmenting the image and uses the resulting segmentation to analyse properties of the image subjects.

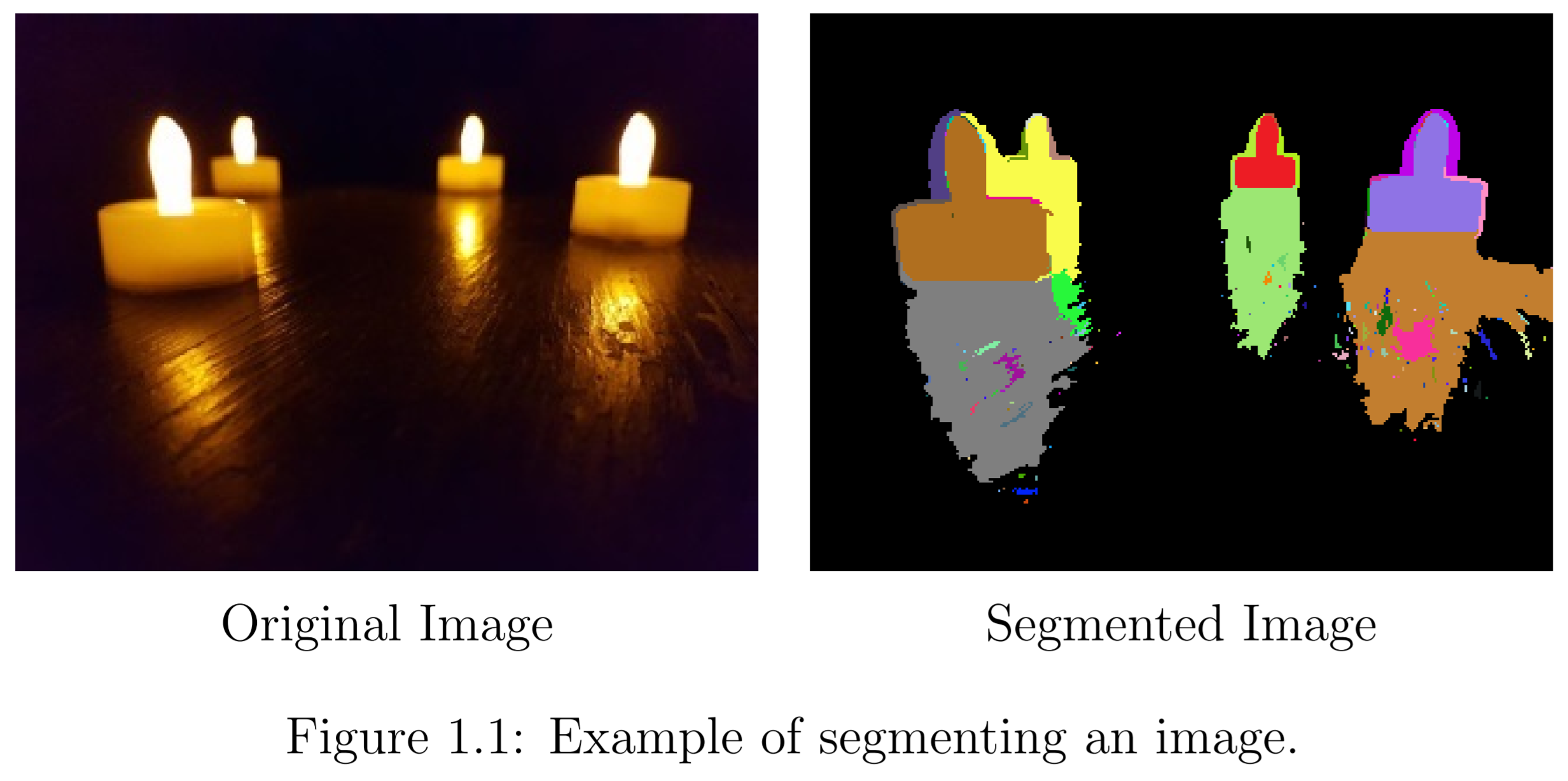

Segmentation is a non-linear operation that extracts meaningful symbols corresponding to the structures and objects in an image. Figure 1.1 shows an example of this abstraction. Humans see an image rich in meaning, whereas computers have only raw data. Segmentation takes this raw data and automatically approximates the high-level symbols.

Throughout this dissertation there is a distinction between “high-level” and “low-level” image properties. High-level properties are those that incorporate top-down contextual information about the image. A feature which is derived from an image’s segmentation is classed as high-level since it has access to approximations for the positions, sizes, and colours of different objects in the image. Conversely, low-level properties are relatively simple and operate on a pixel-by-pixel basis. They infer global characteristics of the image using linear transformations on the pixel data.

High-level features correspond to human analysis of the salient objects. They allow extraction of information about complicated things like scene composition. This is not to say that humans do not consider ideas similar to low-level features when judging image quality. For example, a human certainly could dislike an image because it is too bright. A combination of the two types should be needed to capture more about the aesthetic image quality.